Understanding a Legend, From Pre-Z to the Present: Welcome to ‘The IBM Z Experience’

Joe Gulla follows up on 'z/OS and Friends' with a new series, setting out to understand the impact of the mainframe through a lens of innovation, realization and transformation

It’s been about three months since I concluded the yearlong technical exploration of IBM Z enterprise systems that was “z/OS and Friends.” With that project, my goal was to better understand the mainframe by examining it layer by layer. My aim was to be as comprehensive as possible, but to fully convey the platform’s legendary impact and continued predominance in enterprise computing, I still have much ground to cover.

This article marks the start of a new series exploring IBM Z—let’s call it “The IBM Z Experience.” But instead of scrutinizing the system at the component level, I will take a step back and attempt to explain the mainframe’s legendary status through a lens of innovation, realization and transformation.

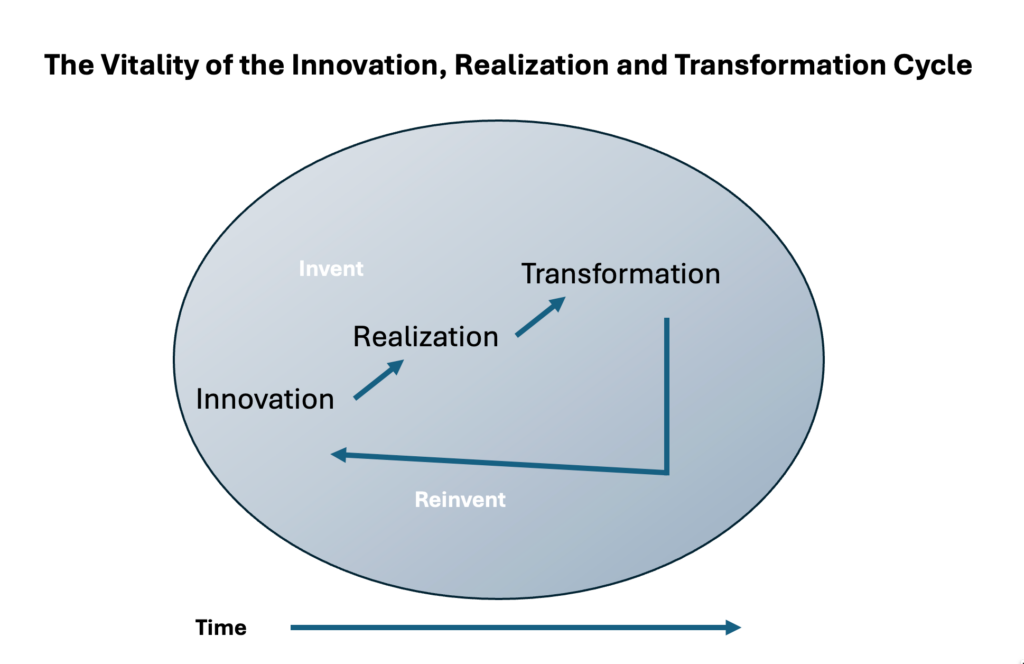

Why choose those three words? Because these powerful nouns support a sequence of actions—invent it, see it used, then watch as it changes an experience.

- Innovation is about the action or process of invention, which describes what IBM has been doing since the mid-1960s, when S/360, the first mainframe, entered the marketplace.

- Realization is about the full recognition of enterprise computing, which is extensively used by innovative organizations of diverse characteristics.

- Transformation is about a dramatic change in form and function, which describes the ongoing growth and change of IBM Z, as well as the impact of those systems on the establishments that deploy them.

When effective, they form a cycle as shown in Figure 1. For IBM mainframes, from S/360 to Z, the cycle has repeated again and again, with increasingly impressive results. These three ways of viewing IBM Z will guide this series.

Figure 1. The IRT cycle.

How Will Things Be Organized for This Series?

IBM Z is an awesome and challenging system to explore because it has so many dimensions, and each domain is deeply detailed and often reflects a high degree of specialization. That makes organizing this series a big challenge, so here is my straightforward plan. I will write multiple articles in each of the six areas below:

- Hardware and Virtualization

- Software and OSes

- People and Processes

- Data and Integration

- Transformation and Integration

- Special Topics

As you can see, the series will take a 360-degree view of IBM Z hardware, software, people, processes and data. Let me jump right into the first topic in the category of hardware and virtualization. My concentration is on the development of IBM Z hardware from mainframes to hybrid cloud enablers, as well as the many other stages in its continuum that have had staying power. This article is the first of four that will focus on this category.

IBM Z Hardware: Developed Over Decades

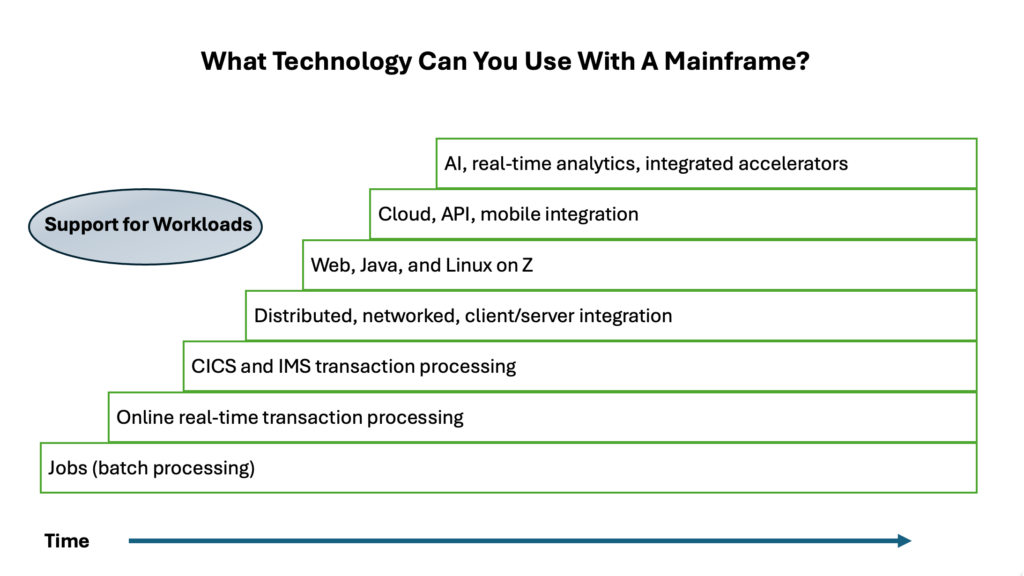

IBM engineers have transformed System Z through many worthwhile contributions. The changes are driven by client needs, innovation possibilities from new technology and changes in the IT marketplace. You can understand the transformation as a sequence of changes that have accumulated over decades, with new functions and support added to what came before, which is carefully maintained to preserve ongoing compatibility.

Batch Was the Original Workload

The transformation that has taken place over decades started with batch processing. Computer batch processing mirrored the steps that were previously done manually by people. Mainframe batch processing is faster than manual work and less error-prone. Many of us who started in IT in the 1970s were making this happen: no reengineering, just automating the current manual processes.

Next came online real-time transaction processing, which happened even before there were formal telecommunications access methods like BTAM and TCAM. I saw this in my first job at ConRail, visiting operations centers in the rail yards that used intelligent typewriters (electromechanical terminals built on the Selectric typewriter) as network devices linked to the mainframe over leased lines.

CICS and IMS Led to an Explosion of Online Systems

Then came CICS and IMS transaction processing, followed by distributed, networked client/server integration. CICS and IMS facilitated an explosion of online systems because they made it so much easier to create online applications. Those application environments are loaded with functionality waiting to be used, like Basic Mapping Support (BMS) in CICS and Message Format Service (MFS) in IMS. The phrase “loaded with functionality” is a huge understatement. Next, distributed computing expanded the mainframe’s job to include a broad range of roles alongside other networked computers.

New Software and Workloads Added to System Z Usefulness

The transformation continued with a focus on the web, Java and Linux on Z. Later, cloud, API and mobile integration brought new kinds of support from IBM. I remember when Java was the new thing at the IBM Research Triangle Park (RTP) Networking Lab. Programmers were learning it by creating any application they could dream up, like conference room scheduling, just to see what they could do with it.

I didn’t realize at the time that its main feature was “write once, run anywhere,” which was a tremendous benefit for applications that run on various hardware platforms. The Java Virtual Machine (JVM) is the interface between the application and the platform. Creating that powerful go-between was a brilliant idea.

The Modern API Is a New(ish) Asset

IT specialists of a certain age remember APIs as interfaces between software entities largely focused on system and application management. Here is a systems management security example of the old type of API.

Today’s APIs are applications implemented as programs that need to be tested and managed just like application programs were in the past. API programs are mainly focused on interfaces to systems and existing applications, but they do so from the position of a persistent software asset. In short, a program.

Figure 2. Workload Integration and Adoption

Recently, AI, real-time analytics and integrated accelerators have been the focus, driven by client demand. Let’s explore how the platform got here in more detail, starting at the beginning.

The Early Era Established the Foundations of IBM Z

Introduced in 1964, IBM’s System/360 represented a dramatic departure from previous computing models and is widely regarded as the birth of the modern mainframe. Its most important breakthrough was the introduction of a unified, general‑purpose architecture that could span a wide range of performance characteristics, from small business systems to large scientific machines. Right from the beginning, IBM 360 had a robust business and scientific instruction set of over 140 instructions.

Ongoing Compatibility Was There From the Start

This concept of architectural compatibility across an entire product line was unprecedented, enabling customers to invest in software and gain experience without fear that their systems would become obsolete. System/360 also standardized peripheral interfaces, formalized byte‑addressable memory, and emphasized reliability and transactional integrity. These are principles that would define enterprise computing for decades. Importantly, System/360 marked a shift from specialized, single‑purpose machines toward scalable, multipurpose platforms capable of supporting business and scientific workloads under one architectural standard.

System/370 and Virtual Memory Pave the Way for VM/370

System/370, launched in 1970, evolved the System/360 line by delivering improved performance and expanded memory capabilities. Critically, it brought hardware‑based support for virtual memory to the product line. With the addition of Dynamic Address Translation (DAT), the 370 family enabled multiple concurrent and isolated address spaces, laying the groundwork for practical timesharing and early virtualization.

When I was a new programmer, TSO and ISPF were the tools we used to do our work. At first, I didn’t realize that my TSO session was in its own address space and all the people around me had theirs too, giving us flexibility and independence.
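The idea of each user having a private address space can be sketched in a few lines of Python. This is a toy model only, not the actual System/370 segment and page table format: the 4 KiB page size, the `AddressSpace` class and the user names are assumptions made for illustration.

```python
PAGE_SIZE = 4096  # assumed page size for this sketch


class AddressSpace:
    """Toy address space: maps virtual page numbers to real frame numbers."""

    def __init__(self, name, page_table):
        self.name = name
        self.page_table = dict(page_table)

    def translate(self, virtual_address):
        """Return the real address, or raise if the page is not mapped."""
        page, offset = divmod(virtual_address, PAGE_SIZE)
        if page not in self.page_table:
            raise LookupError(f"{self.name}: page {page} is not mapped")
        return self.page_table[page] * PAGE_SIZE + offset


# Two hypothetical TSO users, each with a private mapping.
user_a = AddressSpace("USERA", {0: 10, 1: 11})
user_b = AddressSpace("USERB", {0: 20, 1: 21})

# The same virtual address resolves to different real storage per user.
same_virtual = 0x1234  # page 1, offset 0x234
real_a = user_a.translate(same_virtual)
real_b = user_b.translate(same_virtual)
print(hex(real_a), hex(real_b))
```

Because every lookup goes through the owner's own table, one user's virtual address can never reach another user's frames, which is the isolation the paragraph above describes.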

IBM soon took these ideas to the next level with the introduction of the Virtual Machine Facility/370 (VM/370). This product provided full system virtualization and allowed multiple OSes to run simultaneously on a single processor.

VM/370 proved both forward‑looking and remarkably durable. When I worked in the IBM RTP lab, my team made extensive use of VM/370. Looking back now, I don’t see how we could have done the necessary testing of system automation products without the ability to IPL different OS images at different software levels.

System/390 Launched a Renewed OS

By the time System/390 arrived in the 1990s, virtualization, I/O channel sophistication and system reliability had become rock-solid design pillars. System/390 modernized the architecture with expanded addressing, ESA/390 enhancements and improved reliability features, while still fully supporting its architectural heritage.

Pillars of Backward Compatibility, Reliability and Resilience Now Well Established

The System/360, 370 and 390 generations collectively established several strategic design principles that continue to define mainframe engineering.

First, backward compatibility ensured that investments in applications, data and operational skills could be preserved across hardware generations. This philosophy made possible decades‑long continuity for enterprises with mission‑critical workloads.

Second, reliability and resilience were engineered into every layer of the system. This extended from I/O channel independence and error‑checking memory to redundancy, failover mechanisms and strong workload isolation.

The Birth of IBM Z—Architecture, Reliability and Security

The introduction of IBM’s zSeries in 2000 marked a decisive transition toward a computing philosophy centered on near‑continuous availability. This is what IBM characterized as a “zero downtime” operational model.

Building on the legacy of System/390, zSeries systems such as the z900 and later the z990 emphasized nondisruptive upgrades, dynamic capacity additions and the ability to run mixed workloads without requiring scheduled outages. We system programmers could use the MVS SET command to make a PARMLIB change without requiring an IPL. That small difference made a big impact. Remember, outages can be both planned and unplanned.

Reliability, Availability and Serviceability (RAS) Supported by New Robust Features

The architecture formalized the concept of 24x7x365 availability as a baseline requirement for mission‑critical environments, reflecting rising demands from banking, government, healthcare and e‑commerce. With zSeries, downtime became something to design out of the system rather than plan around.

RAS took a major step forward in the zSeries era through extensive hardware‑based protections and isolation mechanisms. Features like pervasive error-correcting code (ECC) memory, fault‑tolerant cache structures, self‑healing process technology and predictive failure analysis allowed the system to detect, contain and recover from faults without interrupting running workloads. Redundant paths for power, cooling and I/O ensured that failures rarely propagated beyond their containment zones.

Advancements in the Security Discipline

Z systems also advanced the state of enterprise security by integrating hardware cryptographic coprocessors, including the Crypto Express family, which provide tamper‑resistant, FIPS‑certified key generation and high‑performance cryptographic operations. These dedicated modules handle symmetric and asymmetric operations, secure key storage and digital signature processing, offloading intensive operations from the general processors.

Secure key management, which is rooted in hardware‑protected master keys and isolation domains, ensures that critical cryptographic material remains inaccessible even to privileged system software. This hardware‑anchored approach to trust laid the foundation for the mainframe’s role in regulated, security‑sensitive industries.

Specialized Engines—Linux and Java Given a Boost

The evolution of processor types in zSeries introduced specialized engines designed to optimize workload distribution and reduce software licensing costs. Integrated Facility for Linux (IFL) engines enable Linux workloads to run on IBM Z and LinuxONE systems without consuming general central processor (CP) capacity.

The original zAAP specialty engine for Java processing has been retired, and zAAP‑eligible workloads are now executed on z Integrated Information Processor (zIIP) engines. zIIPs support a broad and expanding class of workloads, including Java, Db2 data access, analytics, XML processing, networking services and selected middleware and ISV workloads. This allows enterprises to reduce CP utilization and software licensing costs while expanding the range of software and workloads they run on the mainframe.

Together, these specialty engines expanded the platform’s flexibility, encouraged workload consolidation and provided a strategic mechanism for balancing performance with operational cost efficiency.

Table 1. Active Specialized Engines by Workload

| Specialized Engine | Workload |
| --- | --- |
| IFLs | Linux on IBM Z & LinuxONE |
| zIIP | Java, Db2 data access, analytics, XML processing, networking services and selected middleware and ISV workloads |

CPs are not specialized to any workload and can run any authorized workload. Workloads executed on CPs contribute to the software licensing costs (MIPS/MSU rating) of the mainframe.
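The split between specialty engines and CPs can be illustrated with a small sketch. The mapping comes from Table 1, but everything else here is assumed: the workload labels, the CPU-second figures and the helper names `engine_for` and `cp_share` are hypothetical. On a real system, eligibility is decided by the operating system and the products involved, not by a simple lookup.

```python
# Mapping taken from Table 1; labels are illustrative strings, not system names.
SPECIALTY_ENGINE = {
    "linux": "IFL",
    "java": "zIIP",
    "db2 data access": "zIIP",
    "analytics": "zIIP",
    "xml processing": "zIIP",
}


def engine_for(workload):
    """Return the engine type for a workload label, defaulting to CP."""
    return SPECIALTY_ENGINE.get(workload.lower(), "CP")


def cp_share(usage):
    """Fraction of total CPU seconds that lands on CPs.

    usage maps a workload label to CPU seconds (hypothetical figures).
    """
    total = sum(usage.values())
    on_cp = sum(secs for work, secs in usage.items() if engine_for(work) == "CP")
    return on_cp / total if total else 0.0


# Made-up sample: only the COBOL batch work would count against CP capacity.
sample = {"COBOL batch": 600, "Java": 300, "Linux": 100}
share = cp_share(sample)
print(f"Share of work on CPs: {share:.0%}")
```

In this made-up sample, the Java and Linux work runs on zIIP and IFL engines, so only the remaining share contributes to the CP-based licensing measure described above.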

zEnterprise Support for the Hybrid Computing Shift

The introduction of the Integrated Facility for Linux (IFL) transformed the mainframe into a leading consolidation platform for enterprise Linux workloads. By offering a cost‑efficient, virtualization‑ready engine dedicated to Linux, IBM made it possible for organizations to migrate distributed server farms onto a single, highly resilient IBM Z platform.

Thousands of Simultaneous Linux Instances

Adoption grew rapidly as customers realized the advantages of IFL, which included exceptional density through PR/SM and z/VM virtualization, simplified management and the ability to run thousands of Linux instances with predictable performance. Over time, IFLs have become a cornerstone of hybrid mainframe strategies, supporting everything from large-scale web serving to modern containerized applications.

Modern IBM Z Designs Make an Important Impact

Starting with z13 in 2015, IBM emphasized scale‑out Linux, pervasive encryption and analytics acceleration. This focus continued with z14 in 2017, as encryption everywhere was introduced at no performance penalty, though it might more accurately be called “invisible but not free.”

With z15 in 2019, the design focus expanded to data mobility and resilience, enabling live data access across on‑premises and cloud environments while maintaining mainframe‑grade consistency and availability. With these z15 capabilities, data-oriented protection travels with the information, regardless of where it is stored or shared.

Security, AI and Hybrid Cloud Get Additional Focus

From z16 in 2022 through z17 in 2024 and 2025, IBM Z designs centered on zero‑trust security, AI at the core and hybrid cloud operations. z16 introduced on‑chip AI inference acceleration for real‑time fraud detection and operational analytics directly in transaction flows, while extending quantum‑safe cryptography planning and confidential computing capabilities.

z17 continued down this course with higher performance cores, expanded AI acceleration and deeper alignment with Red Hat OpenShift, Kubernetes and API‑driven integration. This positions the platform as a resilient partner in enterprise hybrid architectures. The result is up-to-date IBM Z thinking:

- Keep mission‑critical data and transactions on Z.

- Expose them securely via APIs and events.

- Integrate in a flexible way with cloud and distributed platforms.

More About IBM Z in the Hybrid Cloud Era

With hybrid cloud, IBM Z acts as a secure environment for enterprise workloads across IBM Cloud, Red Hat OpenShift and broader multicloud environments. OpenShift provides a consistent Kubernetes layer across on‑premises Z systems and public clouds, letting teams deploy the same containerized application to z/OS, Linux on Z or off‑platform without refactoring.

IBM Cloud Services, including API Connect, MQ on Cloud, DataPower and cloud‑based DevOps tooling, integrate well with IBM Z applications. The services share mainframe data through APIs while preserving on‑platform security and performance. For enterprises implementing multicloud strategies, IBM Z becomes the trusted core in a distributed architecture. Applications and analytics can run wherever needed, while IBM Z ensures the integrity, availability and transactional consistency required for banking, insurance, healthcare and government workloads.

Workload Modernization Driven by Hardware Evolution

IBM System Z’s hardware evolution—from expanded core counts (208 in z17) to advanced cache hierarchies and AI‑ready processors—has transformed the platform from a pure OLTP powerhouse into a hybrid engine capable of simultaneously supporting transactional integrity, real‑time analytics and cloud‑native microservices. It has been a remarkable journey.

Applications of Record and New Workloads Both Benefit

Traditional COBOL and PL/I OLTP workloads still run with unparalleled throughput and reliability. New Linux‑on‑Z environments, container orchestration and high‑bandwidth I/O channels allow organizations to run Spark analysis, fraud‑scoring models, API layers and event streams alongside system‑of‑record transactions (you know, legacy).

The result is a platform where legacy and modern workloads coexist on the same machine, enabling low‑latency insights and agile service delivery without the need for off‑platform data movement.

Enterprises Gain New Uses

In banking, IBM Z enables real‑time fraud detection, ISO 20022 transaction handling and cloud‑enabled payment gateways. It does this while maintaining the security and auditability demanded by regulators. Healthcare organizations leverage encrypted data processing, high‑volume claims handling and secure API exposure to integrate with digital front‑ends and telehealth platforms.

Governments depend on Z for high‑assurance identity systems, benefits distribution, logistics tracking and continuous cyber‑monitoring. Global retailers use the platform for omnichannel order processing, supply‑chain analytics and loyalty-platform integration. Across all these industries, hardware‑driven innovation ensures that mission‑critical workloads remain fast, compliant and cloud‑connected.

The Takeaway?

Modern IBM Z systems continue to play an important role in large‑scale enterprise systems. Their hardware and OSes support sustained high‑volume transaction processing with strong isolation and security controls. IBM Z availability is on the order of 99.999999%. These systems are widely deployed in sectors such as banking, insurance and retail, where consistent throughput, fault tolerance and predictable performance characteristics remain operational requirements.
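To put that availability figure in perspective, here is a quick back-of-the-envelope calculation. It is plain arithmetic on the percentage quoted above, assuming a 365-day year. The "five nines" row is included only as a familiar comparison point, and the function name is my own.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # assumes a non-leap year


def downtime_seconds_per_year(availability_percent):
    """Downtime per year implied by an availability percentage."""
    return SECONDS_PER_YEAR * (1 - availability_percent / 100)


five_nines = downtime_seconds_per_year(99.999)
eight_nines = downtime_seconds_per_year(99.999999)

print(f"99.999%    -> about {five_nines / 60:.1f} minutes per year")
print(f"99.999999% -> about {eight_nines:.2f} seconds per year")
```

At eight nines, the implied downtime is roughly a third of a second per year, compared with a little over five minutes at five nines.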

Surveys show that 75% of global IT leaders rate mainframes equal to or better than cloud in total cost of ownership, underscoring IBM Z’s continued economic and operational importance. Its newest generation, specifically the z17, integrates on‑chip AI acceleration and quantum‑safe cryptography, enabling real‑time inferencing, pervasive encryption and secure processing directly where mission‑critical data resides. These are capabilities that cloud platforms find hard to match.

The next article in the series will cover virtualization on IBM Z in the form of z/VM, KVM and PR/SM. The scope will include architecture, use cases and performance.